Intel has reportedly integrated its Gaudi 3 rack-scale solution with NVIDIA’s technology stack, pairing its own AI chips with Blackwell GPUs in a configuration it claims delivers notable performance gains.

Intel Showcases a Hybrid AI Server Featuring NVIDIA’s Blackwell Technology Onboard; Claims to Offer Impressive Performance

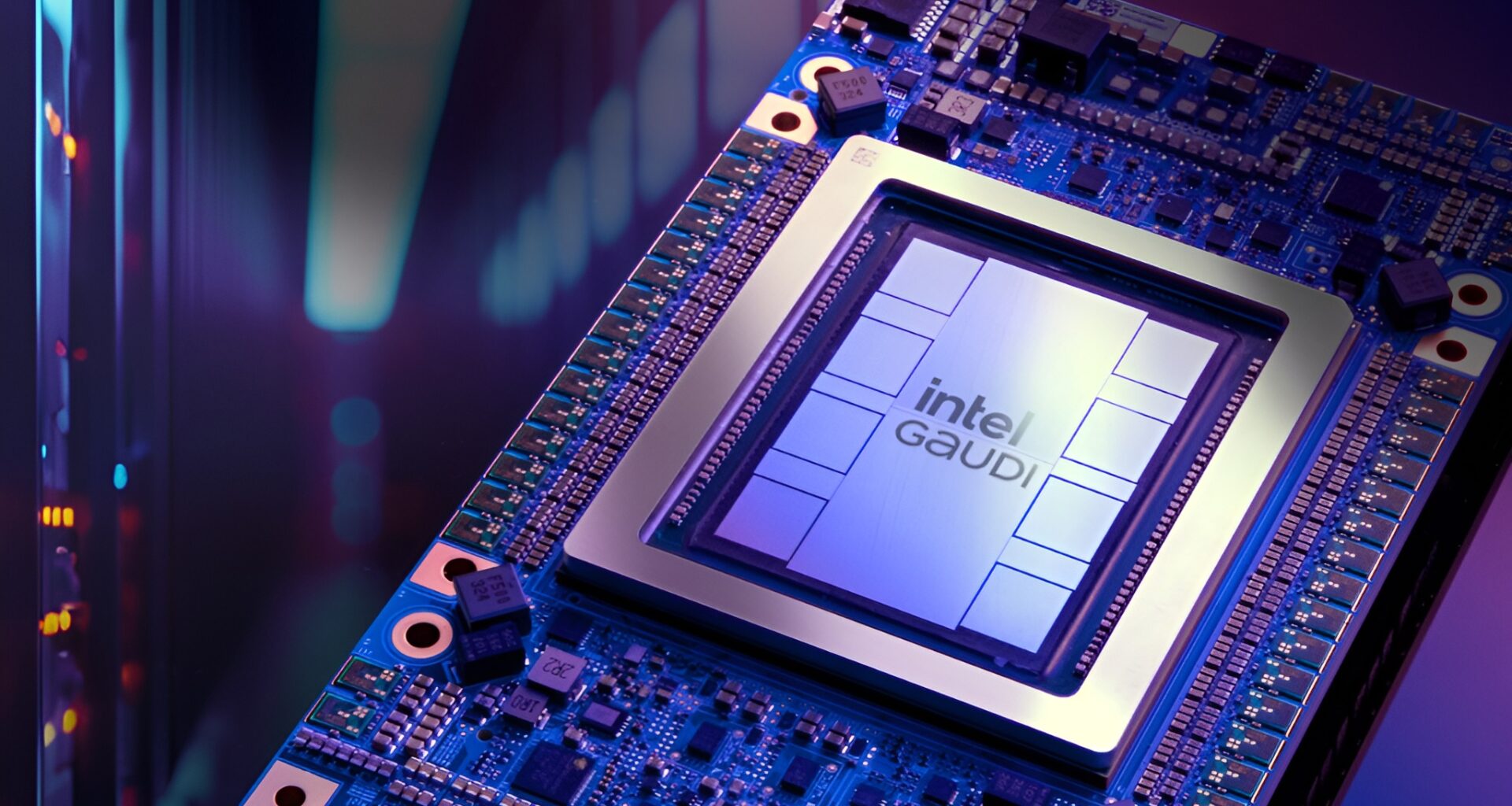

It is no secret that Intel’s AI chips, particularly the Gaudi lineup, have not seen significant industry adoption. The firm has been in a tough spot relative to competitors like NVIDIA and AMD in terms of AI revenue, and now Intel seems to have found a new way to sell its Gaudi platform. According to SemiAnalysis, Intel plans to offer customers a new Gaudi 3 rack-scale system that pairs Gaudi 3 with NVIDIA’s Blackwell B200 GPU in a hybrid configuration, along with ConnectX networking.

Intel just took another step on combining forces 🔥 with NVIDIA by integrating their new Gaudi3 rack scale systems together with NVIDIA B200 via disaggregated PD inferencing. Intel claims that compared their B200 only baseline, and inferencing system using Gaudi3 for decode part… pic.twitter.com/jAKin6rgZx

— SemiAnalysis (@SemiAnalysis_) October 18, 2025

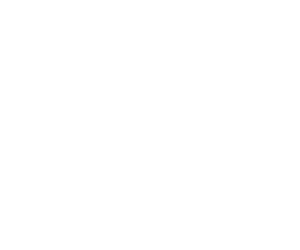

This appears to have been one of the notable announcements at the OCP Global Summit, where Team Blue plans to approach the rack-scale AI segment in an unusual way. Here is how the system is expected to work. In what SemiAnalysis describes as disaggregated prefill/decode (PD) inferencing, Intel’s Gaudi 3 AI chips would handle the ‘decode’ portion of inference workloads, while the B200 takes on the more compute-intensive ‘prefill’ stage. Prefill processes the full input context in large matrix-multiply bursts, which is where Blackwell’s high compute throughput shines, so assigning prefill to the B200 is the right move here.
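To make the prefill/decode split more concrete, here is a minimal toy sketch of disaggregated PD serving in Python. It is not Intel’s or NVIDIA’s software: the class names (`PrefillWorker`, `DecodeWorker`, `KVCache`), the modulo routing, and the "model" that emits the sum of cached tokens mod 100 are all hypothetical stand-ins. It only illustrates the structure: one pool ingests the whole prompt once and builds a KV cache, the cache is handed off, and a second pool extends the sequence one token per step.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    # Toy stand-in for per-layer key/value tensors: one entry per token seen so far.
    entries: list[int] = field(default_factory=list)


@dataclass
class Request:
    request_id: int
    prompt: list[int]      # token IDs
    max_new_tokens: int


class PrefillWorker:
    """Hypothetical prefill worker (the B200 role in the reported rack)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.requests_handled = 0

    def prefill(self, req: Request) -> KVCache:
        # Prefill ingests the entire prompt in one pass; in a real model this is
        # a large, compute-bound matrix multiply over the full context.
        self.requests_handled += 1
        return KVCache(entries=list(req.prompt))


class DecodeWorker:
    """Hypothetical decode worker (the Gaudi 3 role in the reported rack)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.decode_steps = 0

    def decode(self, req: Request, cache: KVCache) -> list[int]:
        generated: list[int] = []
        for _ in range(req.max_new_tokens):
            self.decode_steps += 1
            # Each step reads the whole cache to emit a single token, which is why
            # decode tends to be memory-bandwidth-bound. Toy "model": sum mod 100.
            next_token = sum(cache.entries) % 100
            cache.entries.append(next_token)
            generated.append(next_token)
        return generated


def serve(req: Request, prefill_pool: list[PrefillWorker],
          decode_pool: list[DecodeWorker]) -> list[int]:
    # Route each phase to its own pool (simple modulo placement, an assumption).
    prefill_worker = prefill_pool[req.request_id % len(prefill_pool)]
    decode_worker = decode_pool[req.request_id % len(decode_pool)]
    cache = prefill_worker.prefill(req)
    # In hardware, the KV cache would be shipped across the rack's network here.
    return decode_worker.decode(req, cache)


if __name__ == "__main__":
    prefill_pool = [PrefillWorker("b200-0")]
    decode_pool = [DecodeWorker("gaudi3-0"), DecodeWorker("gaudi3-1")]
    print(serve(Request(request_id=1, prompt=[1, 2, 3], max_new_tokens=3),
                prefill_pool, decode_pool))  # [6, 12, 24]
```

The point of the split is that each pool can be sized and chosen for its own bottleneck: compute for prefill, memory bandwidth for decode.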

Image Credits: SemiAnalysis

In this rack-scale combination, Intel’s Gaudi 3 leans on its memory bandwidth and Ethernet-centric scale-out, which suits the bandwidth-bound decode stage and makes the split seem sensible. On the networking side, the rack uses NVIDIA’s ConnectX-7 400 GbE NICs on the compute trays and Broadcom’s Tomahawk 5 51.2 Tb/s switches at the rack level to provide all-to-all connectivity. According to SemiAnalysis, each compute tray features two Xeon CPUs, four Gaudi 3 AI chips, four NICs, and one NVIDIA BlueField-3 DPU, with sixteen trays per rack.
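The reported tray layout lends itself to some quick rack-level arithmetic. The sketch below multiplies the per-tray counts by sixteen trays and totals the NIC bandwidth. It assumes one 400 GbE port per ConnectX-7 NIC and ignores the BlueField-3 DPU’s ports and how links are actually split across switches, since the report does not detail either; the names `ComputeTray`, `rack_totals`, and `scale_out_gbps` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ComputeTray:
    # Per-tray configuration as reported by SemiAnalysis.
    xeon_cpus: int = 2
    gaudi3_accelerators: int = 4
    connectx7_nics: int = 4
    bluefield3_dpus: int = 1


TRAYS_PER_RACK = 16
NIC_PORT_GBPS = 400          # assumption: one 400 GbE port per ConnectX-7 NIC
TOMAHAWK5_GBPS = 51_200      # 51.2 Tb/s switching capacity per Tomahawk 5 ASIC


def rack_totals(tray: ComputeTray, trays: int) -> dict[str, int]:
    """Multiply the per-tray component counts by the number of trays."""
    return {
        "xeon_cpus": tray.xeon_cpus * trays,
        "gaudi3_accelerators": tray.gaudi3_accelerators * trays,
        "connectx7_nics": tray.connectx7_nics * trays,
        "bluefield3_dpus": tray.bluefield3_dpus * trays,
    }


def scale_out_gbps(tray: ComputeTray, trays: int, port_gbps: int) -> int:
    """Aggregate NIC bandwidth leaving the compute trays (DPU ports not counted)."""
    return tray.connectx7_nics * trays * port_gbps


if __name__ == "__main__":
    tray = ComputeTray()
    print(rack_totals(tray, TRAYS_PER_RACK))
    # {'xeon_cpus': 32, 'gaudi3_accelerators': 64, 'connectx7_nics': 64, 'bluefield3_dpus': 16}
    total = scale_out_gbps(tray, TRAYS_PER_RACK, NIC_PORT_GBPS)
    print(total)                            # 25600 (25.6 Tb/s)
    print(TOMAHAWK5_GBPS // NIC_PORT_GBPS)  # 128 ports at 400 GbE per switch ASIC
    print(total / TOMAHAWK5_GBPS)           # 0.5
```

Under these assumptions, a full rack carries 64 Gaudi 3 chips, and its 64 NIC ports (25.6 Tb/s) amount to half the port capacity of a single Tomahawk 5 ASIC.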

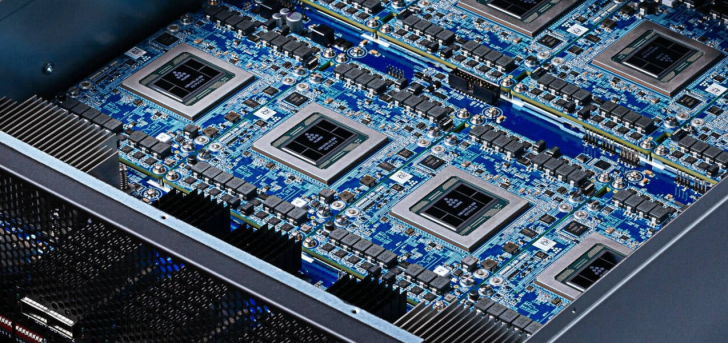

Intel’s Gaudi 2 rack

The Gaudi platform positions itself as a cost-efficient decode engine in an ecosystem dominated by NVIDIA, so Intel’s approach here is essentially “if you cannot beat them, join them.” Intel claims this rack-scale setup achieves 1.7x faster prefill performance than a B200-only baseline on small, dense models; however, this claim has yet to be independently verified. The approach works well for Intel, as it can now monetize the Gaudi platform by bundling it into a rack-scale system. For NVIDIA, the design is a vote of confidence in its networking hardware, which even a rival is building around.

While this hybrid setup sounds promising, the Gaudi AI platform still has an immature software stack, which is likely to limit its adoption. And since the Gaudi architecture is set to be phased out in a few months, we doubt that this rack-scale configuration will reach the same level of mainstream adoption as the alternatives.

Follow Wccftech on Google or add us as a preferred source, to get our news coverage and reviews in your feeds.